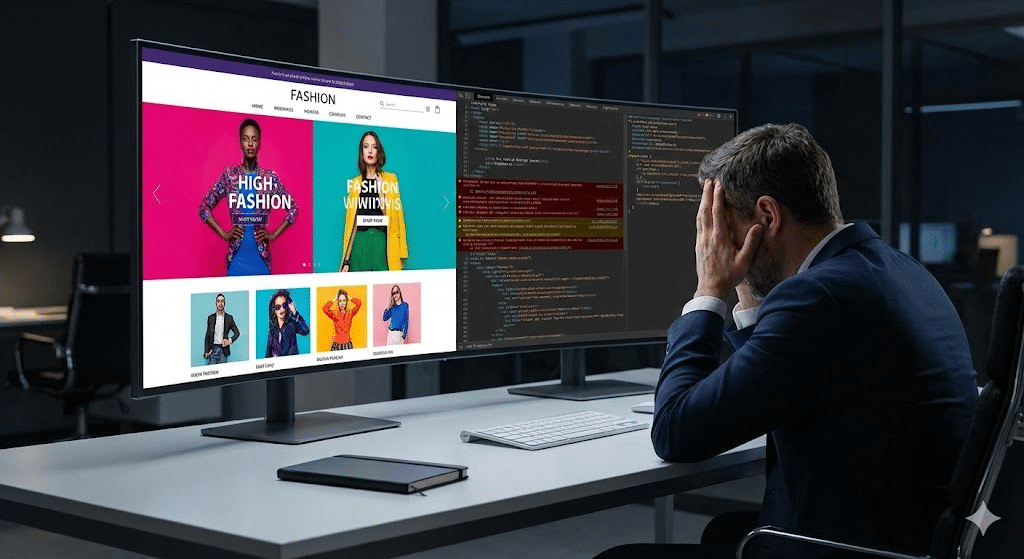

It is a remarkably common and incredibly frustrating scenario for modern business owners. You have just invested a significant portion of your annual marketing budget into a brand-new, custom-designed website. The aesthetic is flawless, the branding is perfectly aligned, and the user interface features cutting-edge animations. You launch the site with high expectations, anticipating a surge in traffic and conversions.

Instead, the opposite occurs.

Weeks turn into months, and your organic traffic flatlines. Your legacy pages, which previously held page-one positions on Google, have mysteriously vanished from the Search Engine Results Pages (SERPs). You are left asking a painful question: why isn’t my website ranking?

The unfortunate reality is that a beautiful digital storefront is entirely useless if search engines cannot find, read, or understand it. This dilemma highlights the fundamental conflict of web design vs SEO. While talented web designers focus on visual hierarchy, brand identity, and human user experience, they frequently overlook the invisible, foundational code that dictates how search engine crawlers interact with your site.

To recover your traffic and ensure your investment generates a return, you must look beneath the surface. Here is an executive breakdown of the critical technical elements your web designer likely missed, and why a comprehensive technical SEO audit is the only path to recovery.

The Disconnect: Web Design vs. SEO

Search engines like Google do not “see” your website the way a human does. They do not appreciate the nuances of your color palette or the elegance of your typography. Googlebot, the crawler responsible for reading your site, reads raw code.

When a website is built purely for aesthetics, developers often utilize heavy scripts, complex frameworks, and convoluted code structures to achieve visual effects. While these elements look impressive to a user, they act as digital brick walls to search engine crawlers. If Google cannot efficiently crawl and index your site, it simply will not rank it. Period.

Resolving this requires more than basic keyword insertion; it demands deep, structural intervention.

1. JavaScript SEO Issues and Rendering Roadblocks

One of the most frequent culprits behind a post-launch traffic drop involves modern web development frameworks. Today, many aesthetically pleasing websites are built using JavaScript frameworks like React, Angular, or Vue.js. These frameworks allow for dynamic, app-like experiences, but they introduce severe JavaScript SEO issues.

By default, many of these frameworks rely on Client-Side Rendering (CSR). This means the user’s browser does the heavy lifting to construct the page. However, when Googlebot first fetches a CSR page, the initial HTML is often a near-empty shell: a few lines of markup that request JavaScript to be executed. Google can render that JavaScript, but rendering happens in a separate, later pass that may be delayed or may fail if scripts error out or time out. Until the page is rendered successfully, your content is effectively invisible to the search engine.

The Fix: A technical SEO audit will identify rendering bottlenecks. The solution often involves implementing Server-Side Rendering (SSR) or pre-rendering, so that when Googlebot visits your site, it is served fully rendered, easy-to-read HTML and your content can be indexed without waiting on JavaScript execution. Dynamic Rendering (serving pre-rendered HTML only to crawlers) is another option, although Google describes it as a workaround rather than a long-term solution.
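As a rough first check for the rendering problem described above, you can measure how much readable text exists in a page's raw HTML before any JavaScript runs. The sketch below is illustrative only: the function name `looks_client_rendered` and the 200-character threshold are assumptions made for this example, not an industry standard, and a real audit would also compare against Google's own rendered view of the page.

```python
from html.parser import HTMLParser


class VisibleTextCounter(HTMLParser):
    """Counts text characters found outside script, style, and similar tags."""

    SKIPPED = {"script", "style", "noscript", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.text_chars = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Only count text a crawler could read without executing scripts.
        if self._skip_depth == 0:
            self.text_chars += len(data.strip())


def looks_client_rendered(raw_html: str, min_text_chars: int = 200) -> bool:
    """Return True when the raw HTML carries very little readable text.

    min_text_chars is an arbitrary illustrative threshold (an assumption).
    """
    counter = VisibleTextCounter()
    counter.feed(raw_html)
    counter.close()
    return counter.text_chars < min_text_chars


# A typical CSR shell: an empty mount point plus a script bundle.
csr_shell = (
    '<html><head><title>App</title></head><body>'
    '<div id="root"></div><script src="/bundle.js"></script></body></html>'
)
print(looks_client_rendered(csr_shell))
```

A page that returns `True` here is not necessarily unindexable, but it is a strong hint that its content depends on JavaScript rendering and deserves a closer look.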

2. Site Architecture Optimization and Crawl Depth

A beautifully designed website often features a streamlined, minimalist navigation menu. While this looks clean, it can inadvertently bury your most important service or product pages deep within the website’s structure.

Search engines rely on internal links to discover pages and understand their relative importance. The ranking value that flows through those links is often referred to as “link equity.” If a crawler has to follow five or six links from your homepage to reach a critical service page, it is likely to treat that page as less important. Deep structures also waste your “crawl budget,” the limited amount of time and resources Google allocates to crawling your site.

The Fix: Proper site architecture optimization requires a logical, “flat” hierarchy. A technical SEO audit will map your internal linking structure to ensure that no critical page is more than three clicks away from the homepage. This makes crawling more efficient and helps distribute ranking power more evenly across your domain.
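Click depth is straightforward to measure once you have a map of which pages link to which. The following is a minimal sketch using a breadth-first search; the link map, the page paths, and the function names are hypothetical, and in practice the map would come from a crawler export rather than being typed by hand.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search: minimum number of clicks from home to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_architecture(links, home, max_depth=3):
    """Return (pages deeper than max_depth, pages unreachable from home)."""
    depths = click_depths(links, home)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    too_deep = sorted(p for p, d in depths.items() if d > max_depth)
    unreachable = sorted(all_pages - set(depths))
    return too_deep, unreachable


# Hypothetical internal link map: page -> pages it links to.
site = {
    "/": ["/services"],
    "/services": ["/services/a"],
    "/services/a": ["/services/a/b"],
    "/services/a/b": ["/services/a/b/c"],
    "/old-landing": ["/"],  # nothing links TO this page
}
too_deep, unreachable = audit_architecture(site, "/")
print(too_deep, unreachable)
```

Pages in the first list are candidates for new links from higher-level pages; pages in the second list cannot be reached by following links from the homepage at all.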

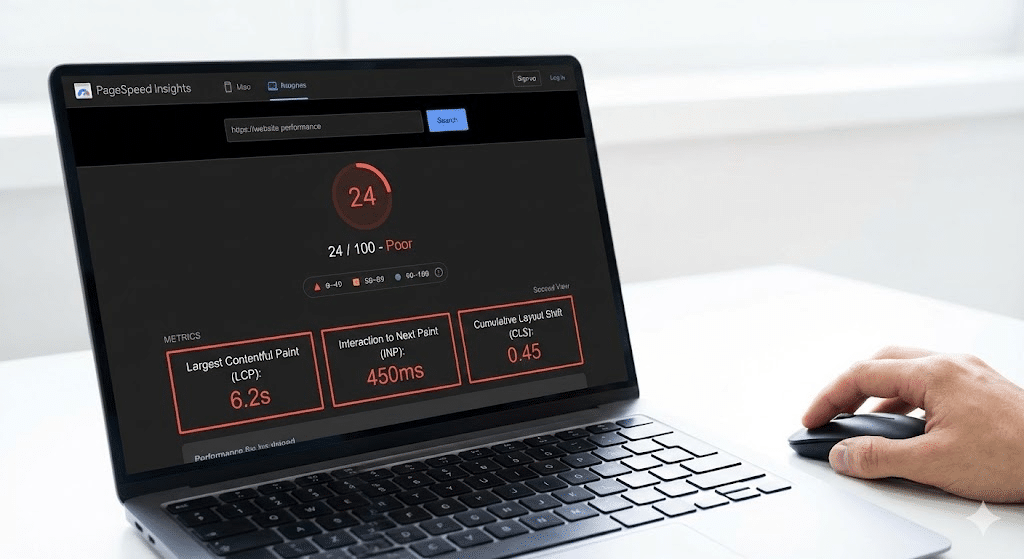

3. Fixing Core Web Vitals: The Speed and Stability Mandate

In recent years, Google has made user experience a direct ranking factor through a set of metrics known as Core Web Vitals. Web designers often prioritize high-resolution images, background videos, and elaborate loading animations. Unfortunately, these large files drastically slow down your website.

A beautiful site that takes five seconds to load is at a disadvantage in Google’s rankings and will lose impatient visitors before they ever see your design. When fixing Core Web Vitals, a technical SEO team looks at three specific metrics:

- Largest Contentful Paint (LCP): How long does it take for the largest element on the screen (usually a hero image or text block) to render? (Target: Under 2.5 seconds).

- Interaction to Next Paint (INP): How quickly does the page respond when a user clicks a button or link? (Target: Under 200 milliseconds).

- Cumulative Layout Shift (CLS): Does the content visually jump around as the page loads, causing users to accidentally click the wrong thing? (Target: Less than 0.1).
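To make the targets above concrete, here is a small sketch that rates a set of measurements against them. The "good" limits match the targets listed above; the upper limits that separate "needs improvement" from "poor" (4.0 seconds, 500 milliseconds, and 0.25) are not stated in this article and follow Google's published guidance. The function names and sample values are illustrative assumptions.

```python
# (good limit, poor limit) per metric.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate_metric(name: str, value: float) -> str:
    """Classify one measurement as good, needs improvement, or poor."""
    good_limit, poor_limit = THRESHOLDS[name]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


def rate_page(measurements: dict[str, float]) -> dict[str, str]:
    """Rate every supplied metric for a single page."""
    return {name: rate_metric(name, value) for name, value in measurements.items()}


# Hypothetical field measurements for one page.
print(rate_page({"LCP": 2.1, "INP": 350, "CLS": 0.3}))
```

Real measurements would come from field data or a lab tool rather than hard-coded numbers, and Google assesses these metrics at the 75th percentile of page loads rather than on a single visit.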

The Fix: Optimizing these metrics requires IT-level server management and code refinement. It involves minifying CSS and JavaScript, implementing aggressive browser caching, compressing images into next-generation formats (like WebP), and utilizing Content Delivery Networks (CDNs).

4. The Hidden Meta and Canonical Catastrophes

When a new website is built, it is typically hosted on a private “staging” server. To prevent Google from indexing this unfinished, duplicate site, developers place a noindex tag in the site’s code, or a Disallow: / directive in the robots.txt file.

Astonishingly, one of the most common reasons a new website fails to rank is that the development team simply forgot to remove these directives when migrating the site to the live domain. You could have the greatest content in the world, but if a line of code is explicitly telling Google to ignore you, your traffic will remain at zero.
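Both leftovers are easy to test for. The sketch below uses only Python's standard library: `urllib.robotparser` to evaluate robots.txt rules and `html.parser` to look for a robots meta tag. The helper names and the example URLs are hypothetical, and the check does not cover a noindex sent through the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def blocked_by_robots(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text disallows the URL for the agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(agent, url)


class RobotsMetaFinder(HTMLParser):
    """Flags a <meta name="robots"> or <meta name="googlebot"> noindex tag."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            if "noindex" in attr.get("content", "").lower():
                self.noindex = True


def has_noindex(html: str) -> bool:
    finder = RobotsMetaFinder()
    finder.feed(html)
    finder.close()
    return finder.noindex


# A staging robots.txt that was never removed after launch.
staging_robots = "User-agent: *\nDisallow: /"
print(blocked_by_robots(staging_robots, "https://example.com/services"))
print(has_noindex('<head><meta name="robots" content="noindex, nofollow"></head>'))
```

Running checks like these against the live homepage and a handful of key pages right after launch catches the problem before it costs weeks of traffic.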

Furthermore, designers often overlook Canonical Tags—code that tells Google which version of a page is the “master” copy. Without proper canonicalization, e-commerce filters and tracking URLs can create thousands of duplicate pages, confusing search engines and diluting your ranking power.
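To see how quickly filter and tracking parameters multiply URLs, consider the sketch below, which groups URLs that collapse to the same address once such parameters are removed. The parameter list, the trailing-slash rule, and the function names are assumptions chosen for illustration; which parameters genuinely create duplicate content depends on the site.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters assumed not to change the page's main content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "gclid", "fbclid", "sort", "color"}


def canonical_form(url: str) -> str:
    """Normalize a URL by dropping ignored parameters and any trailing slash."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, urlencode(kept), ""))


def duplicate_groups(urls: list[str]) -> dict[str, list[str]]:
    """Map each canonical form to the crawled URLs that collapse onto it."""
    groups = defaultdict(list)
    for url in urls:
        groups[canonical_form(url)].append(url)
    return {canon: members for canon, members in groups.items() if len(members) > 1}


crawled = [
    "https://shop.example.com/shoes",
    "https://shop.example.com/shoes?utm_source=news",
    "https://shop.example.com/shoes/?color=red&sort=price",
    "https://shop.example.com/hats?page=2",
]
for canon, members in duplicate_groups(crawled).items():
    print(canon, len(members))
```

Each group represents one page that search engines may be seeing under several addresses. The canonical tag on every member of a group should point to the same preferred URL.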

The Solution: Your Technical SEO Audit Checklist

If your organic traffic has flatlined, visual tweaks will not save you. You need a structural evaluation. A comprehensive technical SEO audit checklist should, at a minimum, include the following:

- Indexability and Crawlability Check: Reviewing the robots.txt file, XML sitemaps, and checking for accidental noindex tags.

- Rendering Analysis: Using tools to see exactly how Googlebot renders your JavaScript-heavy pages versus how a human sees them.

- Core Web Vitals Assessment: Auditing server response times, script execution times, and visual stability across both mobile and desktop.

- Site Architecture Review: Analyzing crawl depth, internal linking structures, and identifying “orphan pages” (pages with no links pointing to them).

- Redirect Mapping: Ensuring all old URLs from your previous website were properly 301-redirected to the new URLs to preserve historical ranking power.

- Duplicate Content & Canonicalization Check: Ensuring dynamic URLs and parameters are not confusing search engines.
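The redirect mapping item in the checklist above lends itself to a simple offline check before launch. The sketch below assumes you have three inputs: the list of URLs from the old site, the planned redirect map, and the set of URLs that exist on the new site. All names and paths are hypothetical, and the check validates the plan on paper; it does not confirm that the live server actually returns 301 responses.

```python
def resolve(redirects: dict[str, str], url: str, max_hops: int = 10):
    """Follow the redirect map; return (final URL or None on a loop, hop count)."""
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            return None, hops  # redirect loop or runaway chain
        seen.add(url)
    return url, hops


def audit_redirects(old_urls, redirects, live_urls):
    """Sort old URLs into missing, chained, and broken redirect problems."""
    report = {"missing": [], "chained": [], "broken": []}
    for old in old_urls:
        if old in live_urls:
            continue  # URL still exists unchanged on the new site
        if old not in redirects:
            report["missing"].append(old)
            continue
        final, hops = resolve(redirects, old)
        if final is None or final not in live_urls:
            report["broken"].append(old)
        elif hops > 1:
            report["chained"].append(old)
    return report


# Hypothetical migration data.
old_urls = ["/about-us", "/services.html", "/blog/post-1", "/contact", "/team"]
redirects = {
    "/about-us": "/about",
    "/services.html": "/services-old",
    "/services-old": "/services",
    "/blog/post-1": "/blog/deleted",
}
live_urls = {"/about", "/services", "/contact"}
print(audit_redirects(old_urls, redirects, live_urls))
```

Missing entries are old pages that will return errors after launch, broken entries redirect to a page that does not exist or loop back on themselves, and chained entries reach the right page only after more than one hop.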

Why You Need a Hybrid Agency

Resolving the conflict between web design and SEO requires a unique skill set. Pure creative agencies excel at design but lack the IT infrastructure knowledge to optimize server performance and complex code. Conversely, standard SEO freelancers may know the keywords but lack the developmental expertise to safely restructure a live website’s architecture.

At Elevated-Marketing.io, we bridge this gap. Because our expertise spans both digital marketing and IT managed services, we do not just identify why your website isn’t ranking—we possess the technical capabilities to actually fix the underlying code, optimize your server environment, and restore your organic traffic without breaking your design.

A beautiful website should be an engine for business growth, not a liability.
